The WWW Utils Module (tendril.utils.www)

This module provides utilities for accessing the internet. All application code should access the internet through this module, since this is where support for proxies and caching is implemented.

Main Provided Elements

- urlopen(url): Opens a url specified by the url parameter.
- get_soup(url): Gets a bs4 parsed soup for the url specified by the parameter.
- get_soup_requests(url[, session]): Gets a bs4 parsed soup for the url specified by the parameter, using a requests session.
- cached_fetcher: The module’s WWWCachedFetcher instance which should be used whenever cached results are desired.
- get_session([target, heuristic]): Gets a pre-configured requests session.
- get_soap_client(wsdl[, cache_requests, ...]): Creates and returns a suds/SOAP client instance bound to the provided WSDL.

This module uses the following configuration values from tendril.utils.config:

Network Proxy Settings

- tendril.utils.config.NETWORK_PROXY_TYPE
- tendril.utils.config.NETWORK_PROXY_IP
- tendril.utils.config.NETWORK_PROXY_PORT
- tendril.utils.config.NETWORK_PROXY_USER
- tendril.utils.config.NETWORK_PROXY_PASS

Caching

- tendril.utils.config.ENABLE_REDIRECT_CACHING: Whether or not redirect caching should be used.
- tendril.utils.config.MAX_AGE_DEFAULT: The default max age to use with all www caching methods which support cache expiry.

Redirect caching speeds up network accesses by saving 301 and 302 redirects, so that the correct URL does not need to be fetched again on a second access. This redirect cache is stored as a pickled object in the INSTANCE_CACHE folder. The effect of this caching is far more apparent when a replicator cache is also used.

This module also provides the WWWCachedFetcher class. An instance of it is available in cached_fetcher and is used by get_soup() and by any application code that wants cached results.

Overall, caching should look something like this:

- WWWCachedFetcher provides short-term (~5 days) caching, aggressively caching whatever goes through it. This caching is NOT HTTP/1.1 compliant. If HTTP/1.1 compliant caching is desired, use the requests-based implementation instead, or use an external caching proxy such as http-replicator.

- CachingRedirectHandler is something of a special case, handling redirects which would otherwise be incredibly expensive. Unfortunately, this layer is also the dumbest cacher and never expires anything. To ‘invalidate’ something in this cache, the entire cache needs to be nuked. It may be worthwhile to consider moving this to redis instead.

Todo

Consider replacing uses of the urllib/urllib2 backend with requests and simplifying this module. Currently, the cache provided with the requests implementation here is the major bottleneck.

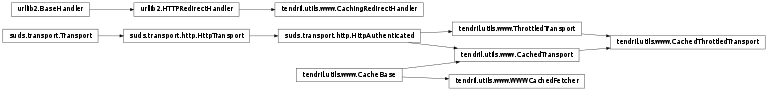

Class Inheritance

tendril.utils.www._get_http_proxy_url()

Constructs the proxy URL for HTTP proxies from the relevant tendril.utils.config options, and returns the URL string in the form:

http://[NP_USER:NP_PASS@]NP_IP[:NP_PORT]

where NP_xxx is obtained from the tendril.utils.config option NETWORK_PROXY_xxx.
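A minimal Python 3 sketch of this construction follows. It is not Tendril’s code. The name get_http_proxy_url and its explicit arguments are hypothetical stand-ins for reading the NETWORK_PROXY_* options. Credentials are inserted verbatim, without URL-quoting, to mirror the form above.

```python
def get_http_proxy_url(ip, port=None, user=None, password=None):
    """Build http://[user:password@]ip[:port], leaving out unset optional parts."""
    # Credentials are included only when a user name is configured;
    # they are inserted verbatim, without URL-quoting.
    credentials = "{0}:{1}@".format(user, password or "") if user else ""
    port_part = ":{0}".format(port) if port else ""
    return "http://{0}{1}{2}".format(credentials, ip, port_part)
```

For example, get_http_proxy_url("10.0.0.1", 3128, "user", "secret") gives "http://user:secret@10.0.0.1:3128".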

tendril.utils.www.strencode(string)

This function converts unicode strings to ASCII using Python’s str.encode(), replacing any unicode characters present in the string. Unicode characters which Tendril expects to see in web content related to it are first replaced with ASCII characters or character sequences which reasonably reproduce their original meanings.

Parameters: string – unicode string to be encoded.

Returns: ASCII version of the string.

Warning

This function is marked for deprecation by the general (but gradual) move towards unicode across Tendril.
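The two-stage approach (known substitutions first, then lossy encoding) can be sketched in Python 3 as below. The replacement table is purely illustrative, since the characters Tendril actually substitutes are not listed here. Because str.encode() returns bytes in Python 3, the sketch decodes the result back to a str.

```python
# Illustrative table only: the characters Tendril actually substitutes are not listed here.
_REPLACEMENTS = {
    "\u00b5": "u",    # micro sign, as in capacitance values
    "\u00b0": "deg",  # degree sign
    "\u2013": "-",    # en dash
    "\u2018": "'",    # left single quotation mark
    "\u2019": "'",    # right single quotation mark
    "\u201c": '"',    # left double quotation mark
    "\u201d": '"',    # right double quotation mark
}


def strencode(string):
    """Return an ASCII-only str: known characters mapped first, '?' for the rest."""
    for uchar, ascii_equiv in _REPLACEMENTS.items():
        string = string.replace(uchar, ascii_equiv)
    # str.encode() returns bytes in Python 3; decode to hand back a str.
    return string.encode("ascii", "replace").decode("ascii")
```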

tendril.utils.www.dump_redirect_cache()

Called during Python interpreter shutdown, this function dumps the redirect cache to the file system.
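One way to persist such a cache is sketched below. It loads a pickled dict at startup and registers the dump with atexit so that it runs at interpreter shutdown. RedirectCache and load_redirect_cache are hypothetical names. The sketch also assumes the cache is a plain {original_url: redirected_url} dict, which this document does not specify.

```python
import atexit
import os
import pickle


class RedirectCache(object):
    """A {original_url: redirected_url} dict persisted as a pickle file."""

    def __init__(self, path):
        self.path = path
        self.redirects = {}
        if os.path.exists(path):
            with open(path, "rb") as f:
                self.redirects = pickle.load(f)

    def dump(self):
        # Overwrite the whole file; nothing in this cache ever expires.
        with open(self.path, "wb") as f:
            pickle.dump(self.redirects, f)


def load_redirect_cache(path, dump_at_exit=True):
    """Load the cache and, optionally, schedule dump() for interpreter shutdown."""
    cache = RedirectCache(path)
    if dump_at_exit:
        atexit.register(cache.dump)
    return cache
```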

class tendril.utils.www.CachingRedirectHandler

Bases: urllib2.HTTPRedirectHandler

This handler modifies the behavior of urllib2.HTTPRedirectHandler so that an HTTP 301 or 302 status is included in the result. When this handler is attached to a urllib2 opener and opening the URL results in a redirect via HTTP 301 or 302, this is reported along with the result. The opener can use this information to maintain a redirect cache.

tendril.utils.www._test_opener(openr)

Tests an opener obtained using urllib2.build_opener() by attempting to open Google’s homepage. This is used to test internet connectivity.

tendril.utils.www._create_opener()

Creates an opener for the internet.

It also attaches the CachingRedirectHandler to the opener and sets its User-agent to Mozilla/5.0. If the network proxy settings are set and recognized, it creates the opener with the proxy_handler attached. The opener is then tested, and returned if the test passes.

If the test fails, an opener without the proxy settings is created and returned instead.

tendril.utils.www.urlopen(url)

Opens a url specified by the url parameter. This function handles redirect caching, if enabled.
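The redirect-cache lookup around the opener might look like the sketch below. It is hypothetical throughout: cached_urlopen, open_url and redirect_status are illustrative names. It assumes the redirect handler tags the response with the original 301/302 code in a separate attribute. A separate attribute is needed because in Python 3 the response’s own status attribute already holds the final (usually 200) status.

```python
def cached_urlopen(url, open_url, redirect_cache, enabled=True):
    """Open url, consulting and updating a {url: final_url} redirect cache.

    open_url is any callable returning a response with a geturl() method;
    redirect_status is a hypothetical attribute set by a redirect handler.
    """
    target = redirect_cache.get(url, url) if enabled else url
    response = open_url(target)
    if enabled and getattr(response, "redirect_status", None) in (301, 302):
        # Remember the final location so the redirect is skipped next time.
        redirect_cache[url] = response.geturl()
    return response
```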

class tendril.utils.www.CacheBase(cache_dir='/home/chintal/.tendril/cache/soupcache')

Bases: object

This class implements a simple filesystem cache which can be used to store and retrieve the responses to various requests for internet resources.

The cache is stored in the folder defined by cache_dir, with a filename constructed by the _get_filepath() function.

If the cache’s _accessor() function is called with the getcpath argument set to True, only the path to a (valid) file in the cache filesystem is returned, and opening and reading the file is left to the caller. This hook is provided to help deal with file encoding on a somewhat case-by-case basis, until the overall encoding problems can be ironed out.

_get_filepath(*args, **kwargs)

Given the parameters necessary to obtain the resource in normal circumstances, return a hash which is usable as the filename for the resource in the cache.

The filename must be unique for every resource, and filename generation must be deterministic and repeatable.

Must be implemented in every subclass.

_get_fresh_content(*args, **kwargs)

Given the parameters necessary to obtain the resource in normal circumstances, obtain the content of the resource from the source.

Must be implemented in every subclass.

static _serialize(response)

Given a response (as returned by _get_fresh_content()), convert it into a string which can be stored in a file. Use this function to serialize structured responses when needed. Unless overridden by the subclass, this function simply returns the response unaltered.

The actions of this function should be reversed by _deserialize().

static _deserialize(filecontent)

Given the contents of a cache file, reconstruct the original response in the original format (as returned by _get_fresh_content()). Use this function to deserialize cache files for structured responses when needed. Unless overridden by the subclass, this function simply returns the file content unaltered.

The actions of this function should be reversed by _serialize().

_is_cache_fresh(filepath, max_age)

Given the path to a file in the cache and the maximum age for the cache content to be considered fresh, returns (boolean) whether or not the cache contains a fresh copy of the response.

Parameters:
- filepath – Path to the filename in the cache corresponding to the request, as returned by _get_filepath().
- max_age – Maximum age of fresh content, in seconds.

_accessor(max_age, getcpath=False, *args, **kwargs)

The primary accessor for the cache instance. Each subclass should provide a function which behaves similarly to the original, un-cached version of the resource getter. That function should adapt the parameters provided to it into the form needed for this one. This function then maintains the cached responses and handles retrieval of the response.

If the module’s _internet_connected is set to False, the cached value is returned regardless.
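The contract above can be illustrated with a compact Python 3 sketch. It assumes freshness is judged from the cache file’s modification time and stores text in text-mode files. It also omits the _internet_connected fallback. None of these details is confirmed by this document.

```python
import os
import time


class CacheBase(object):
    """Minimal filesystem cache; subclasses supply the key and the fetch."""

    def __init__(self, cache_dir):
        self.cache_dir = cache_dir
        os.makedirs(cache_dir, exist_ok=True)

    def _get_filepath(self, *args, **kwargs):
        raise NotImplementedError  # must be unique and deterministic per resource

    def _get_fresh_content(self, *args, **kwargs):
        raise NotImplementedError

    @staticmethod
    def _serialize(response):
        return response

    @staticmethod
    def _deserialize(filecontent):
        return filecontent

    def _is_cache_fresh(self, filepath, max_age):
        # Assumption: freshness is judged from the file's modification time.
        if not os.path.exists(filepath):
            return False
        return time.time() - os.path.getmtime(filepath) < max_age

    def _accessor(self, max_age, getcpath=False, *args, **kwargs):
        filepath = os.path.join(self.cache_dir, self._get_filepath(*args, **kwargs))
        if not self._is_cache_fresh(filepath, max_age):
            content = self._get_fresh_content(*args, **kwargs)
            with open(filepath, "w") as f:
                f.write(self._serialize(content))
        if getcpath:
            # Leave opening and decoding the file to the caller.
            return filepath
        with open(filepath) as f:
            return self._deserialize(f.read())
```

A subclass then only needs _get_filepath() and _get_fresh_content(), plus a public wrapper (like WWWCachedFetcher.fetch()) that calls _accessor().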

class tendril.utils.www.WWWCachedFetcher(cache_dir='/home/chintal/.tendril/cache/soupcache')

Bases: tendril.utils.www.CacheBase

Like CacheBase, this class implements a simple filesystem cache, stored in the folder defined by cache_dir with filenames constructed by the _get_filepath() function. It specializes CacheBase for fetching resources by URL. The getcpath behavior of _accessor() described for CacheBase applies here as well.

_get_filepath(url)

Return a filename constructed from the md5 sum of the url (encoded as utf-8 if necessary).

Parameters: url – url of the resource to be cached.

Returns: name of the cache file.
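In Python 3 terms, that filename derivation amounts to something like the following sketch (url_cache_filename is a hypothetical name). Hashing gives a fixed-length, filesystem-safe name, and the same URL always maps to the same file.

```python
import hashlib


def url_cache_filename(url):
    """Deterministic cache filename: hex md5 digest of the url as UTF-8 bytes."""
    if isinstance(url, str):
        # Text must be encoded before hashing; bytes are used as-is.
        url = url.encode("utf-8")
    return hashlib.md5(url).hexdigest()
```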

_get_fresh_content(url)

Retrieve a fresh copy of the resource from the source.

Parameters: url – url of the resource.

Returns: contents of the resource.

fetch(url, max_age=600000, getcpath=False)

Return the content located at the url provided. If a fresh cached version exists, it is returned. If not, a fresh one is obtained, stored in the cache, and returned.

Parameters:
- url – url of the resource to retrieve.
- max_age – maximum age in seconds (600000 s, about 6.9 days, by default).
- getcpath – (default False) if True, returns only the path to the cache file.

tendril.utils.www.cached_fetcher = <tendril.utils.www.WWWCachedFetcher object>

The module’s WWWCachedFetcher instance which should be used whenever cached results are desired. The cache is stored in the directory defined by tendril.utils.config.WWW_CACHE.

tendril.utils.www.get_soup(url)

Gets a bs4 parsed soup for the url specified by the parameter. The lxml parser is used. This function returns a soup constructed from the cached page if one exists and is valid. Otherwise it obtains the page and dumps it into the cache.

tendril.utils.www._get_proxy_dict()

Constructs a dict containing the proxy settings in a format compatible with requests.Session. This function is used to construct _proxy_dict.
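requests expects proxies as a mapping from URL scheme to proxy URL. A minimal sketch follows, assuming the same HTTP proxy serves both schemes. get_proxy_dict and its argument are hypothetical.

```python
def get_proxy_dict(proxy_url):
    """Scheme-to-proxy mapping in the shape accepted by requests.Session.proxies."""
    if not proxy_url:
        # No proxy configured: an empty dict adds nothing to a session's proxies.
        return {}
    # Assumption: the same HTTP proxy handles both http and https URLs.
    return {"http": proxy_url, "https": proxy_url}
```

A session would then apply it with session.proxies.update(get_proxy_dict(url)).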

tendril.utils.www.requests_cache = <cachecontrol.caches.file_cache.FileCache object>

The module’s cachecontrol.caches.FileCache instance which should be used whenever cached requests responses are desired. The cache is stored in the directory defined by tendril.utils.config.REQUESTS_CACHE.

tendril.utils.www._get_requests_cache_adapter(heuristic)

Given a heuristic, constructs and returns a cachecontrol.CacheControlAdapter attached to the instance’s requests_cache.

tendril.utils.www.get_session(target='http://', heuristic=None)

Gets a pre-configured requests session. This function configures the following behavior into the session:

- Proxy settings are added to the session.
- It is configured to use the instance’s requests_cache.
- Permanent redirect caching is handled by CacheControl.
- Temporary redirect caching is not supported.

Each module or class instance which uses this should subsequently maintain its own session, with whatever modifications it requires, within a scope which makes sense for the use case (and should probably close it when done).

The session returned from here uses the instance’s REQUESTS_CACHE with a single, though configurable, heuristic. If additional caches or heuristics need to be added, it is up to the caller to set them up.

Note

The caching here seems to be pretty bad, particularly for digikey passive component search. I don’t know why.

Parameters:
- target – Defaults to 'http://'. String containing a prefix for the targets that should be cached. Use this to set up site-specific heuristics.
- heuristic (cachecontrol.heuristics.BaseHeuristic) – The heuristic to use for the cache adapter.

Return type: requests.Session

tendril.utils.www.get_soup_requests(url, session=None)

Gets a bs4 parsed soup for the url specified by the parameter. The lxml parser is used.

If a session (previously created from get_session()) is provided, this session is used and left open. If it is not, a new session is created for the request and closed before the soup is returned. A caller-defined session allows a single session to be re-used across multiple requests, taking advantage of HTTP keep-alive to speed things up. It also provides a way for the caller to modify the cache heuristic, if needed.

Any exceptions encountered will be raised and are left for the caller to handle. The assumption is that an HTTP or URL error is going to make the soup unusable anyway.

class tendril.utils.www.ThrottledTransport(**kwargs)

Bases: suds.transport.http.HttpAuthenticated

Provides a throttled HTTP transport for respecting rate limits on rate-restricted SOAP APIs using suds. This class is a suds.transport.Transport subclass based on the default HttpAuthenticated transport.

Parameters: minimum_spacing (int) – Minimum number of seconds between requests. Default 0.

Todo

Use redis or similar to coordinate between threads and allow a maximum requests per hour/day limit.
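The minimum-spacing rule can be sketched independently of suds. This is an assumption-laden illustration, not ThrottledTransport itself:

- Throttle is a hypothetical helper whose wait() a transport’s send() would call before delegating to HttpAuthenticated.
- The clock and sleep functions are injectable, so the behavior can be checked without real delays.
- The lock only coordinates threads within one process, which is why the Todo above mentions redis for anything wider.

```python
import threading
import time


class Throttle(object):
    """Enforce at least minimum_spacing seconds between successive wait() returns."""

    def __init__(self, minimum_spacing=0, clock=time.monotonic, sleep=time.sleep):
        self.minimum_spacing = minimum_spacing
        self._clock = clock
        self._sleep = sleep
        self._last = None
        self._lock = threading.Lock()

    def wait(self):
        # Serialize callers so two threads cannot both skip the wait.
        with self._lock:
            now = self._clock()
            if self._last is not None:
                remaining = self.minimum_spacing - (now - self._last)
                if remaining > 0:
                    self._sleep(remaining)
                    now = self._clock()
            self._last = now
```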

class tendril.utils.www.CachedTransport(**kwargs)

Bases: tendril.utils.www.CacheBase, suds.transport.http.HttpAuthenticated

Provides a cached HTTP transport with request-based caching for SOAP APIs using suds. This is a subclass of CacheBase and the default HttpAuthenticated transport.

Parameters:
- cache_dir – folder where the cache is located.
- max_age – the maximum age in seconds after which a response is considered stale.

_get_filepath(request)

Return a filename constructed from the md5 hash of a combination of the request URL and message content (encoded as utf-8 if necessary).

Parameters: request – the request object for which a cache filename is needed.

Returns: name of the cache file.
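A Python 3 sketch of such a key follows (request_cache_filename is hypothetical). It differs deliberately from a plain concatenation: a NUL separator sits between the URL and the body, so different URL/body splits of the same characters cannot collide.

```python
import hashlib


def request_cache_filename(url, message):
    """md5 hex digest over the request URL and message body, both as UTF-8 bytes."""
    def as_bytes(value):
        return value.encode("utf-8") if isinstance(value, str) else value
    # The NUL separator keeps ("ab", "c") and ("a", "bc") from hashing alike.
    return hashlib.md5(as_bytes(url) + b"\0" + as_bytes(message)).hexdigest()
```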

_get_fresh_content(request)

Retrieve a fresh copy of the resource from the source.

Parameters: request – the request object for which the response is needed.

Returns: the response to the request.

static _serialize(response)

Serializes the suds response object using cPickle. If the response has an error status (anything other than 200), raises ValueError. This is used to avoid caching errored responses.
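Python 3 has no cPickle (the pickle module uses its C accelerator automatically), so a sketch of this guard uses pickle. It assumes the response exposes its HTTP status as a code attribute, taken here to match suds’ transport Reply objects. serialize_response and deserialize_response are hypothetical names.

```python
import pickle


def serialize_response(response):
    """Pickle a response for the cache, refusing to store non-200 replies."""
    status = getattr(response, "code", None)
    if status != 200:
        # The caller is expected to catch this and skip writing the cache file.
        raise ValueError("not caching response with status {0!r}".format(status))
    return pickle.dumps(response)


def deserialize_response(filecontent):
    """Reverse serialize_response()."""
    return pickle.loads(filecontent)
```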

class tendril.utils.www.CachedThrottledTransport(**kwargs)

Bases: tendril.utils.www.ThrottledTransport, tendril.utils.www.CachedTransport

A cached HTTP transport with both throttling and request-based caching for SOAP APIs using suds. This is a subclass of CachedTransport and ThrottledTransport. Keyword arguments not handled here are passed on via ThrottledTransport to HttpTransport.

Parameters:
- cache_dir – folder where the cache is located.
- max_age – the maximum age in seconds after which a response is considered stale.
- minimum_spacing – Minimum number of seconds between requests. Default 0.

_get_fresh_content(request)

Retrieve a fresh copy of the resource from the source via ThrottledTransport.send().

Parameters: request – the request object for which the response is needed.

Returns: the response to the request.

send(request)

Send a request and return the response, using CachedTransport.send().
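How the two bases combine can be sketched with stand-in classes instead of suds. BaseTransport and the counting logic are hypothetical, and the real classes also take cache_dir, max_age and suds options. In the sketch, send() goes through the cache first, and only cache misses reach the throttled path through _get_fresh_content().

```python
class BaseTransport(object):
    """Stand-in for suds' HttpAuthenticated: 'sends' by echoing the request."""

    def send(self, request):
        return "reply to " + request


class ThrottledTransport(BaseTransport):
    def __init__(self, minimum_spacing=0, **kwargs):
        self.minimum_spacing = minimum_spacing
        self.throttled_calls = 0
        super(ThrottledTransport, self).__init__(**kwargs)

    def send(self, request):
        # A real implementation would wait out minimum_spacing here.
        self.throttled_calls += 1
        # Explicit base call: super() here would resolve to CachedTransport
        # in the combined class's MRO.
        return BaseTransport.send(self, request)


class CachedTransport(BaseTransport):
    def __init__(self, **kwargs):
        self.cache = {}
        super(CachedTransport, self).__init__(**kwargs)

    def _get_fresh_content(self, request):
        return BaseTransport.send(self, request)

    def send(self, request):
        if request not in self.cache:
            self.cache[request] = self._get_fresh_content(request)
        return self.cache[request]


class CachedThrottledTransport(ThrottledTransport, CachedTransport):
    def _get_fresh_content(self, request):
        # Cache misses are fetched through the throttle.
        return ThrottledTransport.send(self, request)

    def send(self, request):
        # Every request consults the cache first.
        return CachedTransport.send(self, request)
```

The explicit base call in ThrottledTransport.send() matters here. The combined MRO is CachedThrottledTransport, ThrottledTransport, CachedTransport, BaseTransport, so super() from ThrottledTransport would land in CachedTransport.send(). A cache miss would then re-enter the cache lookup and recurse indefinitely.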

tendril.utils.www.get_soap_client(wsdl, cache_requests=True, max_age=600000, minimum_spacing=0)

Creates and returns a suds/SOAP client instance bound to the provided WSDL. If cache_requests is True, the client is configured to use a CachedThrottledTransport. The transport is constructed to use SOAP_CACHE as the cache folder, along with the max_age and minimum_spacing parameters if provided.

If cache_requests is False, the client uses the default suds.transport.http.HttpAuthenticated transport.